https://rancher.com/docs/rke/latest/en/installation/

https://rancher.com/docs/rke/latest/en/example-yamls/

https://kubernetes.io/docs/tasks/tools/install-kubectl/

https://rancher.com/docs/rke/latest/en/kubeconfig/

Node hostnames and IP addresses

Public IP        Internal IP      Hostname
167.172.114.10   10.138.218.141   rancher-01
159.65.106.35    10.138.218.144   rancher-02
159.65.102.101   10.138.218.146   rancher-03

Basic environment configuration on each node

sed -i 's/^SELINUX=enforcing$/SELINUX=disabled/' /etc/selinux/config
echo "167.172.114.10 rancher-01" >> /etc/hosts
echo "159.65.106.35 rancher-02" >> /etc/hosts
echo "159.65.102.101 rancher-03" >> /etc/hosts
init 6
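
Appending with `echo ... >> /etc/hosts` adds duplicate lines if the step is run a second time. The following is a minimal sketch of an idempotent variant, not part of the original procedure. The helper name `add_host_entry` is hypothetical, and the demo writes to a scratch file instead of `/etc/hosts`.

```shell
#!/bin/sh
# Hypothetical helper: append "IP NAME" to a hosts file only if NAME has no entry yet.
add_host_entry() {
    hosts_file=$1; ip=$2; name=$3
    # Look for NAME as a whole whitespace-delimited field on a non-comment line.
    if ! grep -Eq "^[^#]*[[:space:]]${name}([[:space:]]|\$)" "$hosts_file"; then
        printf '%s %s\n' "$ip" "$name" >> "$hosts_file"
    fi
}

# Demo against a scratch file; pass /etc/hosts (as root) for real use.
demo_hosts=$(mktemp)
add_host_entry "$demo_hosts" 167.172.114.10 rancher-01
add_host_entry "$demo_hosts" 159.65.106.35 rancher-02
add_host_entry "$demo_hosts" 159.65.102.101 rancher-03
add_host_entry "$demo_hosts" 167.172.114.10 rancher-01   # repeated call adds nothing
cat "$demo_hosts"
```

Running the block again against the same file leaves it unchanged, which makes the step safe to repeat on a node that was already prepared.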

Docker runtime configuration on each node

curl https://releases.rancher.com/install-docker/18.09.sh | sh
useradd rancher
usermod -aG docker rancher
echo "rancherpwd" | passwd --stdin rancher
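
RKE logs in as the `rancher` user over SSH and talks to the Docker socket, so that user has to be in the `docker` group. A minimal sketch of a membership check follows. The helper name `has_group` is hypothetical, and the example feeds it a literal group list rather than querying a real account.

```shell
#!/bin/sh
# Hypothetical helper: succeed if GROUP ($2) appears in a space-separated
# group list ($1), such as the output of `id -nG rancher`.
has_group() {
    for g in $1; do
        [ "$g" = "$2" ] && return 0
    done
    return 1
}

# Example with a literal list; on a node, pass "$(id -nG rancher)" instead.
if has_group "rancher docker" docker; then
    echo "rancher is in the docker group"
else
    echo "rancher is NOT in the docker group" >&2
fi
```

On a real node, running `su - rancher -c 'docker ps'` is a more direct end-to-end check that the account can reach the Docker daemon.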

Generate and configure an SSH key pair for the nodes

Generate the key pair

[root@rancher-01 ~]# ssh-keygen
Generating public/private rsa key pair.
Enter file in which to save the key (/root/.ssh/id_rsa):
Enter passphrase (empty for no passphrase):
Enter same passphrase again:
Your identification has been saved in /root/.ssh/id_rsa.
Your public key has been saved in /root/.ssh/id_rsa.pub.
The key fingerprint is:
SHA256:sfL3YnyrNZsioS3ThuOTRME7AIyLxm4Yq396LAaeQOY root@rancher-01
The key's randomart image is:
+---[RSA 2048]----+
| o.. . |
|. . . o |
|o. . o. |
|+= + o |
|Bo ...S |
|=E .o. |
|=... . *.o. o |
|.oo + O =.=o.+ |
| oo= ..* o.==. |
+----[SHA256]-----+
[root@rancher-01 ~]#

Distribute the public key

[root@rancher-01 ~]# ssh-copy-id -i .ssh/id_rsa.pub rancher@rancher-01
/usr/bin/ssh-copy-id: INFO: Source of key(s) to be installed: ".ssh/id_rsa.pub"
The authenticity of host 'rancher-01 (::1)' can't be established.
ECDSA key fingerprint is SHA256:NTaQJddPf6G3saQd2d6iQnF+Txp6YpkwhyiNuSImgNg.
ECDSA key fingerprint is MD5:ee:13:1b:70:95:ab:28:30:20:38:64:69:44:bd:1a:4a.
Are you sure you want to continue connecting (yes/no)? yes
/usr/bin/ssh-copy-id: INFO: attempting to log in with the new key(s), to filter out any that are already installed
/usr/bin/ssh-copy-id: INFO: 1 key(s) remain to be installed -- if you are prompted now it is to install the new keys
rancher@rancher-01's password:
Number of key(s) added: 1
Now try logging into the machine, with: "ssh 'rancher@rancher-01'"
and check to make sure that only the key(s) you wanted were added.
[root@rancher-01 ~]# ssh-copy-id -i .ssh/id_rsa.pub rancher@rancher-02
/usr/bin/ssh-copy-id: INFO: Source of key(s) to be installed: ".ssh/id_rsa.pub"
The authenticity of host 'rancher-02 (159.65.106.35)' can't be established.
ECDSA key fingerprint is SHA256:bZ2ZGx9IIzSGC2fkMEtWULbau8RcAeOOCwh+4QOMU2g.
ECDSA key fingerprint is MD5:48:d9:55:3c:9e:91:8a:47:c1:1a:3e:77:c7:f2:21:a7.
Are you sure you want to continue connecting (yes/no)? yes
/usr/bin/ssh-copy-id: INFO: attempting to log in with the new key(s), to filter out any that are already installed
/usr/bin/ssh-copy-id: INFO: 1 key(s) remain to be installed -- if you are prompted now it is to install the new keys
rancher@rancher-02's password:
Number of key(s) added: 1
Now try logging into the machine, with: "ssh 'rancher@rancher-02'"
and check to make sure that only the key(s) you wanted were added.
[root@rancher-01 ~]# ssh-copy-id -i .ssh/id_rsa.pub rancher@rancher-03
/usr/bin/ssh-copy-id: INFO: Source of key(s) to be installed: ".ssh/id_rsa.pub"
The authenticity of host 'rancher-03 (159.65.102.101)' can't be established.
ECDSA key fingerprint is SHA256:74nZvSQC34O7LrXlRzu/k0MsQzFcucn/n6c8X9CREwM.
ECDSA key fingerprint is MD5:37:2c:97:0e:d2:8e:4b:f5:7e:c5:b2:34:b5:f2:86:60.
Are you sure you want to continue connecting (yes/no)? yes
/usr/bin/ssh-copy-id: INFO: attempting to log in with the new key(s), to filter out any that are already installed
/usr/bin/ssh-copy-id: INFO: 1 key(s) remain to be installed -- if you are prompted now it is to install the new keys
rancher@rancher-03's password:
Number of key(s) added: 1
Now try logging into the machine, with: "ssh 'rancher@rancher-03'"
and check to make sure that only the key(s) you wanted were added.
[root@rancher-01 ~]#
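
The three `ssh-copy-id` invocations above differ only in the hostname, so they can be driven from a loop. This is a minimal sketch under a few assumptions: the `NODES` list, the `DRY_RUN` switch, and the function name `copy_key_to_nodes` are introduced here for illustration, and the default is to print the commands rather than run them.

```shell
#!/bin/sh
# Assumed settings for this sketch: DRY_RUN=1 only prints the commands.
DRY_RUN=${DRY_RUN:-1}
NODES="rancher-01 rancher-02 rancher-03"

# Copy root's public key to the rancher account on every node.
copy_key_to_nodes() {
    for node in $NODES; do
        if [ "$DRY_RUN" = "1" ]; then
            echo "ssh-copy-id -i $HOME/.ssh/id_rsa.pub rancher@$node"
        else
            ssh-copy-id -i "$HOME/.ssh/id_rsa.pub" "rancher@$node"
        fi
    done
}

copy_key_to_nodes
```

Setting `DRY_RUN=0` runs the real command, which still prompts for the host-key confirmation and the `rancher` password on each node, exactly as in the transcript.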

Download and install RKE (Rancher Kubernetes Engine)

[root@rancher-01 ~]# yum -y install wget
[root@rancher-01 ~]# wget https://github.com/rancher/rke/releases/download/v1.1.2/rke_linux-amd64
[root@rancher-01 ~]# ls
anaconda-ks.cfg  original-ks.cfg  rke_linux-amd64
[root@rancher-01 ~]# mv rke_linux-amd64 /usr/bin/rke
[root@rancher-01 ~]# chmod +x /usr/bin/rke
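
The downloaded binary has to match the CPU architecture of the machine that runs `rke`. A minimal sketch of picking the release asset from `uname -m` follows. The helper name `rke_asset_name` is hypothetical, and only the two Linux asset names that appear in this walkthrough are mapped.

```shell
#!/bin/sh
# Hypothetical helper: map `uname -m` output to an RKE release asset name.
rke_asset_name() {
    case "$1" in
        x86_64)        echo "rke_linux-amd64" ;;
        aarch64|arm64) echo "rke_linux-arm64" ;;
        *)             echo "unsupported architecture: $1" >&2; return 1 ;;
    esac
}

# Print the asset that fits the current machine, without downloading anything.
asset=$(rke_asset_name "$(uname -m)") && echo "asset for this machine: $asset"
```

The resulting name can be appended to the release URL used above in place of the hard-coded `rke_linux-amd64`.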

Check the RKE version

[root@rancher-01 ~]# rke --version
rke version v1.1.2
[root@rancher-01 ~]#

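Generate the RKE cluster configuration file (requires OpenSSH Server 6.7 or later; the root user must not be used as the SSH user; the Docker socket path /var/run/docker.sock must be specified)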

[root@rancher-01 ~]# rke config --name cluster.yml
[+] Cluster Level SSH Private Key Path [~/.ssh/id_rsa]:
[+] Number of Hosts [1]: 3
[+] SSH Address of host (1) [none]: 167.172.114.10
[+] SSH Port of host (1) [22]:
[+] SSH Private Key Path of host (167.172.114.10) [none]:
[-] You have entered empty SSH key path, trying fetch from SSH key parameter
[+] SSH Private Key of host (167.172.114.10) [none]: ^C
[root@rancher-01 ~]# rke config --name cluster.yml
[+] Cluster Level SSH Private Key Path [~/.ssh/id_rsa]:
[+] Number of Hosts [1]: 3
[+] SSH Address of host (1) [none]: 167.172.114.10
[+] SSH Port of host (1) [22]:
[+] SSH Private Key Path of host (167.172.114.10) [none]: ~/.ssh/id_rsa
[+] SSH User of host (167.172.114.10) [ubuntu]: rancher
[+] Is host (167.172.114.10) a Control Plane host (y/n)? [y]:
[+] Is host (167.172.114.10) a Worker host (y/n)? [n]:
[+] Is host (167.172.114.10) an etcd host (y/n)? [n]: y
[+] Override Hostname of host (167.172.114.10) [none]: rancher-01
[+] Internal IP of host (167.172.114.10) [none]: 10.138.218.141
[+] Docker socket path on host (167.172.114.10) [/var/run/docker.sock]:
[+] SSH Address of host (2) [none]: 159.65.106.35
[+] SSH Port of host (2) [22]:
[+] SSH Private Key Path of host (159.65.106.35) [none]: ~/.ssh/id_rsa
[+] SSH User of host (159.65.106.35) [ubuntu]: rancher
[+] Is host (159.65.106.35) a Control Plane host (y/n)? [y]: n
[+] Is host (159.65.106.35) a Worker host (y/n)? [n]: y
[+] Is host (159.65.106.35) an etcd host (y/n)? [n]:
[+] Override Hostname of host (159.65.106.35) [none]: rancher-02
[+] Internal IP of host (159.65.106.35) [none]: 10.138.218.144
[+] Docker socket path on host (159.65.106.35) [/var/run/docker.sock]:
[+] SSH Address of host (3) [none]: 159.65.102.101
[+] SSH Port of host (3) [22]:
[+] SSH Private Key Path of host (159.65.102.101) [none]: ~/.ssh/id_rsa
[+] SSH User of host (159.65.102.101) [ubuntu]: rancher
[+] Is host (159.65.102.101) a Control Plane host (y/n)? [y]: n
[+] Is host (159.65.102.101) a Worker host (y/n)? [n]: y
[+] Is host (159.65.102.101) an etcd host (y/n)? [n]:
[+] Override Hostname of host (159.65.102.101) [none]: rancher-03
[+] Internal IP of host (159.65.102.101) [none]: 10.138.218.146
[+] Docker socket path on host (159.65.102.101) [/var/run/docker.sock]:
[+] Network Plugin Type (flannel, calico, weave, canal) [canal]:
[+] Authentication Strategy [x509]:
[+] Authorization Mode (rbac, none) [rbac]:
[+] Kubernetes Docker image [rancher/hyperkube:v1.17.6-rancher2]:
[+] Cluster domain [cluster.local]:
[+] Service Cluster IP Range [10.43.0.0/16]:
[+] Enable PodSecurityPolicy [n]:
[+] Cluster Network CIDR [10.42.0.0/16]:
[+] Cluster DNS Service IP [10.43.0.10]:
[+] Add addon manifest URLs or YAML files [no]:
[root@rancher-01 ~]#
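
The same answers can also be written to the file by hand instead of going through the prompts. The sketch below writes a reduced `cluster.yml` that contains only the values entered above and leaves everything else to RKE's defaults. The `CLUSTER_YML` variable is an assumption of this sketch, and the file goes to a scratch path unless that variable is set, so an existing configuration is not overwritten.

```shell
#!/bin/sh
# Output path is an assumption of this sketch; defaults to a scratch file.
out=${CLUSTER_YML:-$(mktemp)}

# Only the values entered at the prompts; omitted keys fall back to RKE defaults.
cat > "$out" <<'EOF'
ssh_key_path: ~/.ssh/id_rsa
nodes:
- address: 167.172.114.10
  internal_address: 10.138.218.141
  hostname_override: rancher-01
  user: rancher
  docker_socket: /var/run/docker.sock
  role: [controlplane, etcd]
- address: 159.65.106.35
  internal_address: 10.138.218.144
  hostname_override: rancher-02
  user: rancher
  docker_socket: /var/run/docker.sock
  role: [worker]
- address: 159.65.102.101
  internal_address: 10.138.218.146
  hostname_override: rancher-03
  user: rancher
  docker_socket: /var/run/docker.sock
  role: [worker]
network:
  plugin: canal
EOF

echo "wrote $out"
```

Keeping the file this small makes the node layout easy to review, at the cost of not pinning image versions the way the generated file does.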

View the RKE cluster configuration file

[root@rancher-01 ~]# cat cluster.yml
# If you intened to deploy Kubernetes in an air-gapped environment,
# please consult the documentation on how to configure custom RKE images.
nodes:
- address: 167.172.114.10
  port: "22"
  internal_address: 10.138.218.141
  role:
  - controlplane
  - etcd
  hostname_override: rancher-01
  user: rancher
  docker_socket: /var/run/docker.sock
  ssh_key: ""
  ssh_key_path: ~/.ssh/id_rsa
  ssh_cert: ""
  ssh_cert_path: ""
  labels: {}
  taints: []
- address: 159.65.106.35
  port: "22"
  internal_address: 10.138.218.144
  role:
  - worker
  hostname_override: rancher-02
  user: rancher
  docker_socket: /var/run/docker.sock
  ssh_key: ""
  ssh_key_path: ~/.ssh/id_rsa
  ssh_cert: ""
  ssh_cert_path: ""
  labels: {}
  taints: []
- address: 159.65.102.101
  port: "22"
  internal_address: 10.138.218.146
  role:
  - worker
  hostname_override: rancher-03
  user: rancher
  docker_socket: /var/run/docker.sock
  ssh_key: ""
  ssh_key_path: ~/.ssh/id_rsa
  ssh_cert: ""
  ssh_cert_path: ""
  labels: {}
  taints: []
services:
  etcd:
    image: ""
    extra_args: {}
    extra_binds: []
    extra_env: []
    external_urls: []
    ca_cert: ""
    cert: ""
    key: ""
    path: ""
    uid: 0
    gid: 0
    snapshot: null
    retention: ""
    creation: ""
    backup_config: null
  kube-api:
    image: ""
    extra_args: {}
    extra_binds: []
    extra_env: []
    service_cluster_ip_range: 10.43.0.0/16
    service_node_port_range: ""
    pod_security_policy: false
    always_pull_images: false
    secrets_encryption_config: null
    audit_log: null
    admission_configuration: null
    event_rate_limit: null
  kube-controller:
    image: ""
    extra_args: {}
    extra_binds: []
    extra_env: []
    cluster_cidr: 10.42.0.0/16
    service_cluster_ip_range: 10.43.0.0/16
  scheduler:
    image: ""
    extra_args: {}
    extra_binds: []
    extra_env: []
  kubelet:
    image: ""
    extra_args: {}
    extra_binds: []
    extra_env: []
    cluster_domain: cluster.local
    infra_container_image: ""
    cluster_dns_server: 10.43.0.10
    fail_swap_on: false
    generate_serving_certificate: false
  kubeproxy:
    image: ""
    extra_args: {}
    extra_binds: []
    extra_env: []
network:
  plugin: canal
  options: {}
  mtu: 0
  node_selector: {}
  update_strategy: null
authentication:
  strategy: x509
  sans: []
  webhook: null
addons: ""
addons_include: []
system_images:
  etcd: rancher/coreos-etcd:v3.4.3-rancher1
  alpine: rancher/rke-tools:v0.1.56
  nginx_proxy: rancher/rke-tools:v0.1.56
  cert_downloader: rancher/rke-tools:v0.1.56
  kubernetes_services_sidecar: rancher/rke-tools:v0.1.56
  kubedns: rancher/k8s-dns-kube-dns:1.15.0
  dnsmasq: rancher/k8s-dns-dnsmasq-nanny:1.15.0
  kubedns_sidecar: rancher/k8s-dns-sidecar:1.15.0
  kubedns_autoscaler: rancher/cluster-proportional-autoscaler:1.7.1
  coredns: rancher/coredns-coredns:1.6.5
  coredns_autoscaler: rancher/cluster-proportional-autoscaler:1.7.1
  nodelocal: rancher/k8s-dns-node-cache:1.15.7
  kubernetes: rancher/hyperkube:v1.17.6-rancher2
  flannel: rancher/coreos-flannel:v0.11.0-rancher1
  flannel_cni: rancher/flannel-cni:v0.3.0-rancher6
  calico_node: rancher/calico-node:v3.13.4
  calico_cni: rancher/calico-cni:v3.13.4
  calico_controllers: rancher/calico-kube-controllers:v3.13.4
  calico_ctl: rancher/calico-ctl:v3.13.4
  calico_flexvol: rancher/calico-pod2daemon-flexvol:v3.13.4
  canal_node: rancher/calico-node:v3.13.4
  canal_cni: rancher/calico-cni:v3.13.4
  canal_flannel: rancher/coreos-flannel:v0.11.0
  canal_flexvol: rancher/calico-pod2daemon-flexvol:v3.13.4
  weave_node: weaveworks/weave-kube:2.6.4
  weave_cni: weaveworks/weave-npc:2.6.4
  pod_infra_container: rancher/pause:3.1
  ingress: rancher/nginx-ingress-controller:nginx-0.32.0-rancher1
  ingress_backend: rancher/nginx-ingress-controller-defaultbackend:1.5-rancher1
  metrics_server: rancher/metrics-server:v0.3.6
  windows_pod_infra_container: rancher/kubelet-pause:v0.1.3
ssh_key_path: ~/.ssh/id_rsa
ssh_cert_path: ""
ssh_agent_auth: false
authorization:
  mode: rbac
  options: {}
ignore_docker_version: false
kubernetes_version: ""
private_registries: []
ingress:
  provider: ""
  options: {}
  node_selector: {}
  extra_args: {}
  dns_policy: ""
  extra_envs: []
  extra_volumes: []
  extra_volume_mounts: []
  update_strategy: null
cluster_name: ""
cloud_provider:
  name: ""
prefix_path: ""
addon_job_timeout: 0
bastion_host:
  address: ""
  port: ""
  user: ""
  ssh_key: ""
  ssh_key_path: ""
  ssh_cert: ""
  ssh_cert_path: ""
monitoring:
  provider: ""
  options: {}
  node_selector: {}
  update_strategy: null
  replicas: null
restore:
  restore: false
  snapshot_name: ""
dns: null
[root@rancher-01 ~]#
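
Before deploying, it can help to confirm how many nodes carry each role. The sketch below counts role entries with `grep`, assuming roles are listed one per line as `- controlplane`, `- etcd`, or `- worker` (the block-list form that `rke config` writes). The helper name `count_role` is hypothetical, and the demo runs on a small sample file rather than the real `cluster.yml`.

```shell
#!/bin/sh
# Hypothetical helper: count list items equal to ROLE ($2) in FILE ($1).
count_role() {
    # grep -c prints 0 but exits non-zero when nothing matches, hence "|| true".
    grep -Ec "^[[:space:]]*-[[:space:]]+$2[[:space:]]*\$" "$1" || true
}

# Sample with the same node/role layout as the cluster in this walkthrough.
sample=$(mktemp)
cat > "$sample" <<'EOF'
nodes:
- address: 167.172.114.10
  role:
  - controlplane
  - etcd
- address: 159.65.106.35
  role:
  - worker
- address: 159.65.102.101
  role:
  - worker
EOF

echo "controlplane: $(count_role "$sample" controlplane)"
echo "etcd: $(count_role "$sample" etcd)"
echo "worker: $(count_role "$sample" worker)"
```

With a single etcd and control plane node, as configured here, the cluster has no redundancy for those roles, which is fine for a test setup but worth knowing before relying on it.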

Deploy the cluster

[root@rancher-01 ~]# rke up --config cluster.yml
INFO[0000] Running RKE version: v1.1.2
INFO[0000] Initiating Kubernetes cluster
INFO[0000] [dialer] Setup tunnel for host [159.65.102.101]
INFO[0000] [dialer] Setup tunnel for host [159.65.106.35]
INFO[0000] [dialer] Setup tunnel for host [167.172.114.10]
INFO[0000] Checking if container [cluster-state-deployer] is running on host [167.172.114.10], try #1
INFO[0000] Pulling image [rancher/rke-tools:v0.1.56] on host [167.172.114.10], try #1
INFO[0005] Image [rancher/rke-tools:v0.1.56] exists on host [167.172.114.10]
INFO[0005] Starting container [cluster-state-deployer] on host [167.172.114.10], try #1
INFO[0005] [state] Successfully started [cluster-state-deployer] container on host [167.172.114.10]
INFO[0006] Checking if container [cluster-state-deployer] is running on host [159.65.106.35], try #1
INFO[0006] Pulling image [rancher/rke-tools:v0.1.56] on host [159.65.106.35], try #1
INFO[0012] Image [rancher/rke-tools:v0.1.56] exists on host [159.65.106.35]
INFO[0012] Starting container [cluster-state-deployer] on host [159.65.106.35], try #1
INFO[0012] [state] Successfully started [cluster-state-deployer] container on host [159.65.106.35]
INFO[0012] Checking if container [cluster-state-deployer] is running on host [159.65.102.101], try #1
INFO[0012] Pulling image [rancher/rke-tools:v0.1.56] on host [159.65.102.101], try #1
INFO[0020] Image [rancher/rke-tools:v0.1.56] exists on host [159.65.102.101]
INFO[0020] Starting container [cluster-state-deployer] on host [159.65.102.101], try #1
INFO[0021] [state] Successfully started [cluster-state-deployer] container on host [159.65.102.101]
INFO[0021] [certificates] Generating CA kubernetes certificates
INFO[0021] [certificates] Generating Kubernetes API server aggregation layer requestheader client CA certificates
INFO[0021] [certificates] GenerateServingCertificate is disabled, checking if there are unused kubelet certificates
INFO[0021] [certificates] Generating Kubernetes API server certificates
INFO[0022] [certificates] Generating Service account token key
INFO[0022] [certificates] Generating Kube Controller certificates
INFO[0022] [certificates] Generating Kube Scheduler certificates
INFO[0022] [certificates] Generating Kube Proxy certificates
INFO[0022] [certificates] Generating Node certificate
INFO[0022] [certificates] Generating admin certificates and kubeconfig
INFO[0022] [certificates] Generating Kubernetes API server proxy client certificates
INFO[0023] [certificates] Generating kube-etcd-10-138-218-141 certificate and key
INFO[0023] Successfully Deployed state file at [./cluster.rkestate]
INFO[0023] Building Kubernetes cluster
INFO[0023] [dialer] Setup tunnel for host [159.65.102.101]
INFO[0023] [dialer] Setup tunnel for host [167.172.114.10]
INFO[0023] [dialer] Setup tunnel for host [159.65.106.35]
INFO[0023] [network] Deploying port listener containers
INFO[0023] Image [rancher/rke-tools:v0.1.56] exists on host [167.172.114.10]
INFO[0023] Starting container [rke-etcd-port-listener] on host [167.172.114.10], try #1
INFO[0024] [network] Successfully started [rke-etcd-port-listener] container on host [167.172.114.10]
INFO[0024] Image [rancher/rke-tools:v0.1.56] exists on host [167.172.114.10]
INFO[0024] Starting container [rke-cp-port-listener] on host [167.172.114.10], try #1
INFO[0024] [network] Successfully started [rke-cp-port-listener] container on host [167.172.114.10]
INFO[0024] Image [rancher/rke-tools:v0.1.56] exists on host [159.65.106.35]
INFO[0024] Image [rancher/rke-tools:v0.1.56] exists on host [159.65.102.101]
INFO[0024] Starting container [rke-worker-port-listener] on host [159.65.102.101], try #1
INFO[0024] Starting container [rke-worker-port-listener] on host [159.65.106.35], try #1
INFO[0024] [network] Successfully started [rke-worker-port-listener] container on host [159.65.102.101]
INFO[0024] [network] Successfully started [rke-worker-port-listener] container on host [159.65.106.35]
INFO[0024] [network] Port listener containers deployed successfully
INFO[0024] [network] Running control plane -> etcd port checks
INFO[0024] Image [rancher/rke-tools:v0.1.56] exists on host [167.172.114.10]
INFO[0024] Starting container [rke-port-checker] on host [167.172.114.10], try #1
INFO[0025] [network] Successfully started [rke-port-checker] container on host [167.172.114.10]
INFO[0025] Removing container [rke-port-checker] on host [167.172.114.10], try #1
INFO[0025] [network] Running control plane -> worker port checks
INFO[0025] Image [rancher/rke-tools:v0.1.56] exists on host [167.172.114.10]
INFO[0025] Starting container [rke-port-checker] on host [167.172.114.10], try #1
INFO[0025] [network] Successfully started [rke-port-checker] container on host [167.172.114.10]
INFO[0025] Removing container [rke-port-checker] on host [167.172.114.10], try #1
INFO[0025] [network] Running workers -> control plane port checks
INFO[0025] Image [rancher/rke-tools:v0.1.56] exists on host [159.65.106.35]
INFO[0025] Image [rancher/rke-tools:v0.1.56] exists on host [159.65.102.101]
INFO[0025] Starting container [rke-port-checker] on host [159.65.106.35], try #1
INFO[0025] Starting container [rke-port-checker] on host [159.65.102.101], try #1
INFO[0025] [network] Successfully started [rke-port-checker] container on host [159.65.106.35]
INFO[0025] Removing container [rke-port-checker] on host [159.65.106.35], try #1
INFO[0026] [network] Successfully started [rke-port-checker] container on host [159.65.102.101]
INFO[0026] Removing container [rke-port-checker] on host [159.65.102.101], try #1
INFO[0026] [network] Checking KubeAPI port Control Plane hosts
INFO[0026] [network] Removing port listener containers
INFO[0026] Removing container [rke-etcd-port-listener] on host [167.172.114.10], try #1
INFO[0026] [remove/rke-etcd-port-listener] Successfully removed container on host [167.172.114.10]
INFO[0026] Removing container [rke-cp-port-listener] on host [167.172.114.10], try #1
INFO[0026] [remove/rke-cp-port-listener] Successfully removed container on host [167.172.114.10]
INFO[0026] Removing container [rke-worker-port-listener] on host [159.65.106.35], try #1
INFO[0026] Removing container [rke-worker-port-listener] on host [159.65.102.101], try #1
INFO[0026] [remove/rke-worker-port-listener] Successfully removed container on host [159.65.102.101]
INFO[0026] [remove/rke-worker-port-listener] Successfully removed container on host [159.65.106.35]
INFO[0026] [network] Port listener containers removed successfully
INFO[0026] [certificates] Deploying kubernetes certificates to Cluster nodes
INFO[0026] Checking if container [cert-deployer] is running on host [159.65.106.35], try #1
INFO[0026] Checking if container [cert-deployer] is running on host [159.65.102.101], try #1
INFO[0026] Checking if container [cert-deployer] is running on host [167.172.114.10], try #1
INFO[0026] Image [rancher/rke-tools:v0.1.56] exists on host [159.65.106.35]
INFO[0026] Image [rancher/rke-tools:v0.1.56] exists on host [159.65.102.101]
INFO[0026] Image [rancher/rke-tools:v0.1.56] exists on host [167.172.114.10]
INFO[0026] Starting container [cert-deployer] on host [167.172.114.10], try #1
INFO[0026] Starting container [cert-deployer] on host [159.65.106.35], try #1
INFO[0026] Starting container [cert-deployer] on host [159.65.102.101], try #1
INFO[0027] Checking if container [cert-deployer] is running on host [167.172.114.10], try #1
INFO[0027] Checking if container [cert-deployer] is running on host [159.65.106.35], try #1
INFO[0027] Checking if container [cert-deployer] is running on host [159.65.102.101], try #1
INFO[0032] Checking if container [cert-deployer] is running on host [167.172.114.10], try #1
INFO[0032] Removing container [cert-deployer] on host [167.172.114.10], try #1
INFO[0032] Checking if container [cert-deployer] is running on host [159.65.106.35], try #1
INFO[0032] Removing container [cert-deployer] on host [159.65.106.35], try #1
INFO[0032] Checking if container [cert-deployer] is running on host [159.65.102.101], try #1
INFO[0032] Removing container [cert-deployer] on host [159.65.102.101], try #1
INFO[0032] [reconcile] Rebuilding and updating local kube config
INFO[0032] Successfully Deployed local admin kubeconfig at [./kube_config_cluster.yml]
INFO[0032] [certificates] Successfully deployed kubernetes certificates to Cluster nodes
INFO[0032] [file-deploy] Deploying file [/etc/kubernetes/audit-policy.yaml] to node [167.172.114.10]
INFO[0032] Image [rancher/rke-tools:v0.1.56] exists on host [167.172.114.10]
INFO[0032] Starting container [file-deployer] on host [167.172.114.10], try #1
INFO[0032] Successfully started [file-deployer] container on host [167.172.114.10]
INFO[0032] Waiting for [file-deployer] container to exit on host [167.172.114.10]
INFO[0032] Waiting for [file-deployer] container to exit on host [167.172.114.10]
INFO[0032] Container [file-deployer] is still running on host [167.172.114.10]
INFO[0033] Waiting for [file-deployer] container to exit on host [167.172.114.10]
INFO[0033] Removing container [file-deployer] on host [167.172.114.10], try #1
INFO[0033] [remove/file-deployer] Successfully removed container on host [167.172.114.10]
INFO[0033] [/etc/kubernetes/audit-policy.yaml] Successfully deployed audit policy file to Cluster control nodes
INFO[0033] [reconcile] Reconciling cluster state
INFO[0033] [reconcile] This is newly generated cluster
INFO[0033] Pre-pulling kubernetes images
INFO[0033] Pulling image [rancher/hyperkube:v1.17.6-rancher2] on host [167.172.114.10], try #1
INFO[0033] Pulling image [rancher/hyperkube:v1.17.6-rancher2] on host [159.65.102.101], try #1
INFO[0033] Pulling image [rancher/hyperkube:v1.17.6-rancher2] on host [159.65.106.35], try #1
INFO[0065] Image [rancher/hyperkube:v1.17.6-rancher2] exists on host [167.172.114.10]
INFO[0071] Image [rancher/hyperkube:v1.17.6-rancher2] exists on host [159.65.106.35]
INFO[0080] Image [rancher/hyperkube:v1.17.6-rancher2] exists on host [159.65.102.101]
INFO[0080] Kubernetes images pulled successfully
INFO[0080] [etcd] Building up etcd plane..
INFO[0080] Image [rancher/rke-tools:v0.1.56] exists on host [167.172.114.10]
INFO[0080] Starting container [etcd-fix-perm] on host [167.172.114.10], try #1
INFO[0081] Successfully started [etcd-fix-perm] container on host [167.172.114.10]
INFO[0081] Waiting for [etcd-fix-perm] container to exit on host [167.172.114.10]
INFO[0081] Waiting for [etcd-fix-perm] container to exit on host [167.172.114.10]
INFO[0081] Container [etcd-fix-perm] is still running on host [167.172.114.10]
INFO[0082] Waiting for [etcd-fix-perm] container to exit on host [167.172.114.10]
INFO[0082] Removing container [etcd-fix-perm] on host [167.172.114.10], try #1
INFO[0082] [remove/etcd-fix-perm] Successfully removed container on host [167.172.114.10]
INFO[0082] Pulling image [rancher/coreos-etcd:v3.4.3-rancher1] on host [167.172.114.10], try #1
INFO[0085] Image [rancher/coreos-etcd:v3.4.3-rancher1] exists on host [167.172.114.10]
INFO[0085] Starting container [etcd] on host [167.172.114.10], try #1
INFO[0086] [etcd] Successfully started [etcd] container on host [167.172.114.10]
INFO[0086] [etcd] Running rolling snapshot container [etcd-snapshot-once] on host [167.172.114.10]
INFO[0086] Image [rancher/rke-tools:v0.1.56] exists on host [167.172.114.10]
INFO[0086] Starting container [etcd-rolling-snapshots] on host [167.172.114.10], try #1
INFO[0086] [etcd] Successfully started [etcd-rolling-snapshots] container on host [167.172.114.10]
INFO[0091] Image [rancher/rke-tools:v0.1.56] exists on host [167.172.114.10]
INFO[0091] Starting container [rke-bundle-cert] on host [167.172.114.10], try #1
INFO[0091] [certificates] Successfully started [rke-bundle-cert] container on host [167.172.114.10]
INFO[0091] Waiting for [rke-bundle-cert] container to exit on host [167.172.114.10]
INFO[0091] Container [rke-bundle-cert] is still running on host [167.172.114.10]
INFO[0092] Waiting for [rke-bundle-cert] container to exit on host [167.172.114.10]
INFO[0092] [certificates] successfully saved certificate bundle [/opt/rke/etcd-snapshots//pki.bundle.tar.gz] on host [167.172.114.10]
INFO[0092] Removing container [rke-bundle-cert] on host [167.172.114.10], try #1
INFO[0092] Image [rancher/rke-tools:v0.1.56] exists on host [167.172.114.10]
INFO[0092] Starting container [rke-log-linker] on host [167.172.114.10], try #1
INFO[0093] [etcd] Successfully started [rke-log-linker] container on host [167.172.114.10]
INFO[0093] Removing container [rke-log-linker] on host [167.172.114.10], try #1
INFO[0093] [remove/rke-log-linker] Successfully removed container on host [167.172.114.10]
INFO[0093] [etcd] Successfully started etcd plane.. Checking etcd cluster health
INFO[0093] [controlplane] Building up Controller Plane..
INFO[0093] Checking if container [service-sidekick] is running on host [167.172.114.10], try #1
INFO[0093] Image [rancher/rke-tools:v0.1.56] exists on host [167.172.114.10]
INFO[0093] Image [rancher/hyperkube:v1.17.6-rancher2] exists on host [167.172.114.10]
INFO[0093] Starting container [kube-apiserver] on host [167.172.114.10], try #1
INFO[0093] [controlplane] Successfully started [kube-apiserver] container on host [167.172.114.10]
INFO[0093] [healthcheck] Start Healthcheck on service [kube-apiserver] on host [167.172.114.10]
INFO[0098] [healthcheck] service [kube-apiserver] on host [167.172.114.10] is healthy
INFO[0098] Image [rancher/rke-tools:v0.1.56] exists on host [167.172.114.10]
INFO[0098] Starting container [rke-log-linker] on host [167.172.114.10], try #1
INFO[0099] [controlplane] Successfully started [rke-log-linker] container on host [167.172.114.10]
INFO[0099] Removing container [rke-log-linker] on host [167.172.114.10], try #1
INFO[0099] [remove/rke-log-linker] Successfully removed container on host [167.172.114.10]
INFO[0099] Image [rancher/hyperkube:v1.17.6-rancher2] exists on host [167.172.114.10]
INFO[0099] Starting container [kube-controller-manager] on host [167.172.114.10], try #1
INFO[0099] [controlplane] Successfully started [kube-controller-manager] container on host [167.172.114.10]
INFO[0099] [healthcheck] Start Healthcheck on service [kube-controller-manager] on host [167.172.114.10]
INFO[0104] [healthcheck] service [kube-controller-manager] on host [167.172.114.10] is healthy
INFO[0104] Image [rancher/rke-tools:v0.1.56] exists on host [167.172.114.10]
INFO[0104] Starting container [rke-log-linker] on host [167.172.114.10], try #1
INFO[0104] [controlplane] Successfully started [rke-log-linker] container on host [167.172.114.10]
INFO[0104] Removing container [rke-log-linker] on host [167.172.114.10], try #1
INFO[0105] [remove/rke-log-linker] Successfully removed container on host [167.172.114.10]
INFO[0105] Image [rancher/hyperkube:v1.17.6-rancher2] exists on host [167.172.114.10]
INFO[0105] Starting container [kube-scheduler] on host [167.172.114.10], try #1
INFO[0105] [controlplane] Successfully started [kube-scheduler] container on host [167.172.114.10]
INFO[0105] [healthcheck] Start Healthcheck on service [kube-scheduler] on host [167.172.114.10]
INFO[0110] [healthcheck] service [kube-scheduler] on host [167.172.114.10] is healthy
INFO[0110] Image [rancher/rke-tools:v0.1.56] exists on host [167.172.114.10]
INFO[0110] Starting container [rke-log-linker] on host [167.172.114.10], try #1
INFO[0110] [controlplane] Successfully started [rke-log-linker] container on host [167.172.114.10]
INFO[0110] Removing container [rke-log-linker] on host [167.172.114.10], try #1
INFO[0110] [remove/rke-log-linker] Successfully removed container on host [167.172.114.10]
INFO[0110] [controlplane] Successfully started Controller Plane..
INFO[0110] [authz] Creating rke-job-deployer ServiceAccount
INFO[0110] [authz] rke-job-deployer ServiceAccount created successfully
INFO[0110] [authz] Creating system:node ClusterRoleBinding
INFO[0110] [authz] system:node ClusterRoleBinding created successfully
INFO[0110] [authz] Creating kube-apiserver proxy ClusterRole and ClusterRoleBinding
INFO[0110] [authz] kube-apiserver proxy ClusterRole and ClusterRoleBinding created successfully
INFO[0110] Successfully Deployed state file at [./cluster.rkestate]
INFO[0110] [state] Saving full cluster state to Kubernetes
INFO[0111] [state] Successfully Saved full cluster state to Kubernetes ConfigMap: full-cluster-state
INFO[0111] [worker] Building up Worker Plane..
INFO[0111] Checking if container [service-sidekick] is running on host [167.172.114.10], try #1
INFO[0111] Image [rancher/rke-tools:v0.1.56] exists on host [159.65.102.101]
INFO[0111] Image [rancher/rke-tools:v0.1.56] exists on host [159.65.106.35]
INFO[0111] [sidekick] Sidekick container already created on host [167.172.114.10]
INFO[0111] Image [rancher/hyperkube:v1.17.6-rancher2] exists on host [167.172.114.10]
INFO[0111] Starting container [kubelet] on host [167.172.114.10], try #1
INFO[0111] Starting container [nginx-proxy] on host [159.65.106.35], try #1
INFO[0111] Starting container [nginx-proxy] on host [159.65.102.101], try #1
INFO[0111] [worker] Successfully started [kubelet] container on host [167.172.114.10]
INFO[0111] [healthcheck] Start Healthcheck on service [kubelet] on host [167.172.114.10]
INFO[0111] [worker] Successfully started [nginx-proxy] container on host [159.65.106.35]
INFO[0111] Image [rancher/rke-tools:v0.1.56] exists on host [159.65.106.35]
INFO[0111] [worker] Successfully started [nginx-proxy] container on host [159.65.102.101]
INFO[0111] Image [rancher/rke-tools:v0.1.56] exists on host [159.65.102.101]
INFO[0111] Starting container [rke-log-linker] on host [159.65.106.35], try #1
INFO[0111] Starting container [rke-log-linker] on host [159.65.102.101], try #1
INFO[0111] [worker] Successfully started [rke-log-linker] container on host [159.65.106.35]
INFO[0111] Removing container [rke-log-linker] on host [159.65.106.35], try #1
INFO[0111] [worker] Successfully started [rke-log-linker] container on host [159.65.102.101]
INFO[0111] Removing container [rke-log-linker] on host [159.65.102.101], try #1
INFO[0111] [remove/rke-log-linker] Successfully removed container on host [159.65.106.35]
INFO[0111] Checking if container [service-sidekick] is running on host [159.65.106.35], try #1
INFO[0111] Image [rancher/rke-tools:v0.1.56] exists on host [159.65.106.35]
INFO[0111] Image [rancher/hyperkube:v1.17.6-rancher2] exists on host [159.65.106.35]
INFO[0112] [remove/rke-log-linker] Successfully removed container on host [159.65.102.101]
INFO[0112] Checking if container [service-sidekick] is running on host [159.65.102.101], try #1
INFO[0112] Image [rancher/rke-tools:v0.1.56] exists on host [159.65.102.101]
INFO[0112] Starting container [kubelet] on host [159.65.106.35], try #1
INFO[0112] Image [rancher/hyperkube:v1.17.6-rancher2] exists on host [159.65.102.101]
INFO[0112] Starting container [kubelet] on host [159.65.102.101], try #1
INFO[0112] [worker] Successfully started [kubelet] container on host [159.65.106.35]
INFO[0112] [healthcheck] Start Healthcheck on service [kubelet] on host [159.65.106.35]
INFO[0112] [worker] Successfully started [kubelet] container on host [159.65.102.101]
INFO[0112] [healthcheck] Start Healthcheck on service [kubelet] on host [159.65.102.101]
INFO[0116] [healthcheck] service [kubelet] on host [167.172.114.10] is healthy
INFO[0116] Image [rancher/rke-tools:v0.1.56] exists on host [167.172.114.10]
INFO[0116] Starting container [rke-log-linker] on host [167.172.114.10], try #1
INFO[0116] [worker] Successfully started [rke-log-linker] container on host [167.172.114.10]
INFO[0116] Removing container [rke-log-linker] on host [167.172.114.10], try #1
INFO[0116] [remove/rke-log-linker] Successfully removed container on host [167.172.114.10]
INFO[0116] Image [rancher/hyperkube:v1.17.6-rancher2] exists on host [167.172.114.10]
INFO[0116] Starting container [kube-proxy] on host [167.172.114.10], try #1
INFO[0117] [worker] Successfully started [kube-proxy] container on host [167.172.114.10]
INFO[0117] [healthcheck] Start Healthcheck on service [kube-proxy] on host [167.172.114.10]
INFO[0117] [healthcheck] service [kubelet] on host [159.65.106.35] is healthy
INFO[0117] Image [rancher/rke-tools:v0.1.56] exists on host [159.65.106.35]
INFO[0117] [healthcheck] service [kubelet] on host [159.65.102.101] is healthy
INFO[0117] Starting container [rke-log-linker] on host [159.65.106.35], try #1
INFO[0117] Image [rancher/rke-tools:v0.1.56] exists on host [159.65.102.101]
INFO[0117] Starting container [rke-log-linker] on host [159.65.102.101], try #1
INFO[0117] [worker] Successfully started [rke-log-linker] container on host [159.65.106.35]
INFO[0117] Removing container [rke-log-linker] on host [159.65.106.35], try #1
INFO[0117] [worker] Successfully started [rke-log-linker] container on host [159.65.102.101]
INFO[0117] Removing container [rke-log-linker] on host [159.65.102.101], try #1
INFO[0118] [remove/rke-log-linker] Successfully removed container on host [159.65.106.35]
INFO[0118] Image [rancher/hyperkube:v1.17.6-rancher2] exists on host [159.65.106.35]
INFO[0118] Starting container [kube-proxy] on host [159.65.106.35], try #1
INFO[0118] [remove/rke-log-linker] Successfully removed container on host [159.65.102.101]
INFO[0118] Image [rancher/hyperkube:v1.17.6-rancher2] exists on host [159.65.102.101]
INFO[0118] Starting container [kube-proxy] on host [159.65.102.101], try #1
INFO[0118] [worker] Successfully started [kube-proxy] container on host [159.65.106.35]
INFO[0118] [healthcheck] Start Healthcheck on service [kube-proxy] on host [159.65.106.35]
INFO[0118] [worker] Successfully started [kube-proxy] container on host [159.65.102.101]
INFO[0118] [healthcheck] Start Healthcheck on service [kube-proxy] on host [159.65.102.101]
INFO[0122] [healthcheck] service [kube-proxy] on host [167.172.114.10] is healthy
INFO[0122] Image [rancher/rke-tools:v0.1.56] exists on host [167.172.114.10]
INFO[0122] Starting container [rke-log-linker] on host [167.172.114.10], try #1
INFO[0122] [worker] Successfully started [rke-log-linker] container on host [167.172.114.10]
INFO[0122] Removing container [rke-log-linker] on host [167.172.114.10], try #1
INFO[0122] [remove/rke-log-linker] Successfully removed container on host [167.172.114.10]
INFO[0123] [healthcheck] service [kube-proxy] on host [159.65.106.35] is healthy
INFO[0123] Image [rancher/rke-tools:v0.1.56] exists on host [159.65.106.35]
INFO[0123] Starting container [rke-log-linker] on host [159.65.106.35], try #1
INFO[0123] [healthcheck] service [kube-proxy] on host [159.65.102.101] is healthy
INFO[0123] Image [rancher/rke-tools:v0.1.56] exists on host [159.65.102.101]
INFO[0123] Starting container [rke-log-linker] on host [159.65.102.101], try #1
INFO[0123] [worker] Successfully started [rke-log-linker] container on host [159.65.106.35]
INFO[0123] Removing container [rke-log-linker] on host [159.65.106.35], try #1
INFO[0124] [remove/rke-log-linker] Successfully removed container on host [159.65.106.35]
INFO[0124] [worker] Successfully started [rke-log-linker] container on host [159.65.102.101]
INFO[0124] Removing container [rke-log-linker] on host [159.65.102.101], try #1
INFO[0124] [remove/rke-log-linker] Successfully removed container on host [159.65.102.101]
INFO[0124] [worker] Successfully started Worker Plane..
INFO[0124] Image [rancher/rke-tools:v0.1.56] exists on host [167.172.114.10]
INFO[0124] Image [rancher/rke-tools:v0.1.56] exists on host [159.65.106.35]
INFO[0124] Image [rancher/rke-tools:v0.1.56] exists on host [159.65.102.101]
INFO[0124] Starting container [rke-log-cleaner] on host [167.172.114.10], try #1
INFO[0124] Starting container [rke-log-cleaner] on host [159.65.106.35], try #1
INFO[0124] Starting container [rke-log-cleaner] on host [159.65.102.101], try #1
INFO[0124] [cleanup] Successfully started [rke-log-cleaner] container on host [167.172.114.10]
INFO[0124] Removing container [rke-log-cleaner] on host [167.172.114.10], try #1
INFO[0124] [cleanup] Successfully started [rke-log-cleaner] container on host [159.65.106.35]
INFO[0124] Removing container [rke-log-cleaner] on host [159.65.106.35], try #1
INFO[0125] [remove/rke-log-cleaner] Successfully removed container on host [167.172.114.10]
INFO[0125] [cleanup] Successfully started [rke-log-cleaner] container on host [159.65.102.101]
INFO[0125] Removing container [rke-log-cleaner] on host [159.65.102.101], try #1
INFO[0125] [remove/rke-log-cleaner] Successfully removed container on host [159.65.106.35]
INFO[0125] [remove/rke-log-cleaner] Successfully removed container on host [159.65.102.101]
INFO[0125] [sync] Syncing nodes Labels and Taints
INFO[0125] [sync] Successfully synced nodes Labels and Taints
INFO[0125] [network] Setting up network plugin: canal
INFO[0125] [addons] Saving ConfigMap for addon rke-network-plugin to Kubernetes
INFO[0125] [addons] Successfully saved ConfigMap for addon rke-network-plugin to Kubernetes
INFO[0125] [addons] Executing deploy job rke-network-plugin
INFO[0130] [addons] Setting up coredns
INFO[0130] [addons] Saving ConfigMap for addon rke-coredns-addon to Kubernetes
INFO[0130] [addons] Successfully saved ConfigMap for addon rke-coredns-addon to Kubernetes
INFO[0130] [addons] Executing deploy job rke-coredns-addon
INFO[0135] [addons] CoreDNS deployed successfully
INFO[0135] [dns] DNS provider coredns deployed successfully
INFO[0135] [addons] Setting up Metrics Server
INFO[0135] [addons] Saving ConfigMap for addon rke-metrics-addon to Kubernetes
INFO[0135] [addons] Successfully saved ConfigMap for addon rke-metrics-addon to Kubernetes
INFO[0135] [addons] Executing deploy job rke-metrics-addon
INFO[0140] [addons] Metrics Server deployed successfully
INFO[0140] [ingress] Setting up nginx ingress controller
INFO[0140] [addons] Saving ConfigMap for addon rke-ingress-controller to Kubernetes
INFO[0140] [addons] Successfully saved ConfigMap for addon rke-ingress-controller to Kubernetes
INFO[0140] [addons] Executing deploy job rke-ingress-controller
INFO[0145] [ingress] ingress controller nginx deployed successfully
INFO[0145] [addons] Setting up user addons
INFO[0145] [addons] no user addons defined
INFO[0145] Finished building Kubernetes cluster successfully
[root@rancher-01 ~]#

View the generated kubeconfig file

[root@rancher-01 ~]# cat kube_config_cluster.yml apiVersion: v1 kind: Config clusters: - cluster: api-version: v1 certificate-authority-data: LS0tLS1CRUdJTiBDRVJUSUZJQ0FURS0tLS0tCk1JSUN3akNDQWFxZ0F3SUJBZ0lCQURBTkJna3Foa2lHOXcwQkFRc0ZBREFTTVJBd0RnWURWUVFERXdkcmRXSmwKTFdOaE1CNFhEVEl3TURZeE1qQTROVFF3T0ZvWERUTXdNRFl4TURBNE5UUXdPRm93RWpFUU1BNEdBMVVFQXhNSAphM1ZpWlMxallUQ0NBU0l3RFFZSktvWklodmNOQVFFQkJRQURnZ0VQQURDQ0FRb0NnZ0VCQU5NU3FXQWRkSjVECjRxQ0NMWXRHVWY4SjdIdUIvVlpaYldLckY4M3NZSzVaaEFsK0VrRnlhWFUwWU9aZGdHQldTZDVoMDVNa0VJQmkKZEdFK1gxWDd5RVM1R0NUMGUxYTU2Z1hXMXljQUxiYzVxZUxSQmtpV2p5b09KT0tJSHJBWGxOZVpQcHA4Tm9oYgpuOG50c29BaHc2TVcySjRERWQ2L2lZcGUxMkl4QlhQVVE1R1Y3aE5SV3k3WHVoQ2NDclRDQTRrNmVlakNTdTFrCnYyRUxUMTZ1ekc5OStwUFM2MElRd25FTjFzOFV2Rms2VU1ZVzFPTnFQbXBGdE52M0hmTkZ3OGpHVWh5MXZKeEgKVm9Ed2JqUmZQUHlabERvSmFETXBnSFpxSlhTSlFvaHkxaVk3aXBuaHh6L1VHMExyMmZZZk4vZEhDeUJHOWp4NAplM1JwMGRxTWFOVUNBd0VBQWFNak1DRXdEZ1lEVlIwUEFRSC9CQVFEQWdLa01BOEdBMVVkRXdFQi93UUZNQU1CCkFmOHdEUVlKS29aSWh2Y05BUUVMQlFBRGdnRUJBQlJUUVJVSDRSM2tPVEJLQVJZTkV4YW1VZ3BqQjNDNmVFZGgKaVdOU2lJU0FnMHlSUnlLakpIb21ibUpjRHA3UVAxUWtnamg5a3Z3TnpOU1EwQ3NaRmVMaUpJVHc3U3l0d1huaApmZmtQUnpTTXB2ZVpzZ0RVWExadlkzQjM3bFpCSDhSR0xNQTlYRVZRVUdUcDBSWTFqNE5VN2llaUJycDRveG42CnZNajQra0xocE9pZUJjQlZ6MXVLN0Vkbk5uRTZvcVFNemkzNHBNbDJHT3dWbEY1UVVYcVRNTTJoM244MU04WEcKdFpDOFY2RTlzSXZsTjFkT2tPTEpYWnIwQnI1aGdpbVNCd0xKbks3WlhRTkFjL2pzb3FCa3BEM2ZwWG1OUzFNaQpQWnJqb0t6RWlhclVwNXZsQndFY2pRUDI3TlNQOWVROGkzV2dpVE85VEd6djQ4SzdiOUU9Ci0tLS0tRU5EIENFUlRJRklDQVRFLS0tLS0K server: "https://167.172.114.10:6443" name: "local" contexts: - context: cluster: "local" user: "kube-admin-local" name: "local" current-context: "local" users: - name: "kube-admin-local" user: client-certificate-data: 
LS0tLS1CRUdJTiBDRVJUSUZJQ0FURS0tLS0tCk1JSUM2VENDQWRHZ0F3SUJBZ0lJSEN2MzNVd2FnWTB3RFFZSktvWklodmNOQVFFTEJRQXdFakVRTUE0R0ExVUUKQXhNSGEzVmlaUzFqWVRBZUZ3MHlNREEyTVRJd09EVTBNRGhhRncwek1EQTJNVEF3T0RVME1EbGFNQzR4RnpBVgpCZ05WQkFvVERuTjVjM1JsYlRwdFlYTjBaWEp6TVJNd0VRWURWUVFERXdwcmRXSmxMV0ZrYldsdU1JSUJJakFOCkJna3Foa2lHOXcwQkFRRUZBQU9DQVE4QU1JSUJDZ0tDQVFFQXFMdjUvTDYxdG5ybitZV2VNMDlDWnJNeEI5NkEKSVdSZFQ5M2poNTdYaXdsb0Jtd3NsOStYLzdmZnBGTzZYcXV1QUVDWW4zZEJ2WnMvc256R1I5YUl2NXhpZ1pxRgpDZ0ZCakpsNjE0UVB3N0FGYVJDUTRyMTlxTEdEUS9EMmhhV25YQm4rZU5pNlZsRXlFNVU0cEttVUM1U2FITUdXCmRRR0h2MTZ4bmdyQVllb2gwRzRCbmErV0wyNDNybG5DNVROZ2QwOUJRV2V5Vng5SUppZ3hzcCtkTEMyM2J2MUkKS1VIM0VwV0hJNGFLK05CeWN2SzRMUU9jRUVlWEZuTnRDUmZ3ZkVNeThVbTAwQUZiZG90OGpHajhYTzhlYzlpRgplT21pbUhXZFdDa01uZHJiNDFtSWU3MEVKUGZwM0FxVmRTMkg4azd3MWxaa2NzVkNBa2psbWpYZVlRSURBUUFCCm95Y3dKVEFPQmdOVkhROEJBZjhFQkFNQ0JhQXdFd1lEVlIwbEJBd3dDZ1lJS3dZQkJRVUhBd0l3RFFZSktvWkkKaHZjTkFRRUxCUUFEZ2dFQkFKTnNFaUhta0tPVnpnSVJWOEdSRTZqL2lGQ1lWSzVIakVtR0YzTk9KcUhBNUVLZAo0SDVRZWFubTBuRUpzOFVYdithSUhNcTZ3QjRBc3c5MnJsdnV5NUxIZVNJbVN6UCtVbTdqT0hYZGdjK3d2TXI3Cmt6L1VuT3FPNlJPQ3JUZ1Rod1ZtbHYvNTRxTTZJTkI3aWI1YzNZRlRFU2lJbHdxM05KYU1rMDV6QWp6N3lPM3YKaXdDQ1U0ckJRa2l4MGVQVFlLREJYV1lNOFpUakhLby9TT2JYRFBFRTFVYWFnM2FsMU4xUXNiWUcrYlk2ZWt0VQpSdkpxV0lJNTE5Um5kVWxGMW9zaGNySVJRYlFTSll0S0E5clJhVEZ6SUpIOVR5dldJeXcrSHUrYUpBdkpJdTRnCmIvMkpBUzFHZ0orcjQwc1lqL3o1d04xMHBXWVgyS1RTMWxrVUlnYz0KLS0tLS1FTkQgQ0VSVElGSUNBVEUtLS0tLQo= client-key-data: 
LS0tLS1CRUdJTiBSU0EgUFJJVkFURSBLRVktLS0tLQpNSUlFb3dJQkFBS0NBUUVBcUx2NS9MNjF0bnJuK1lXZU0wOUNack14Qjk2QUlXUmRUOTNqaDU3WGl3bG9CbXdzCmw5K1gvN2ZmcEZPNlhxdXVBRUNZbjNkQnZacy9zbnpHUjlhSXY1eGlnWnFGQ2dGQmpKbDYxNFFQdzdBRmFSQ1EKNHIxOXFMR0RRL0QyaGFXblhCbitlTmk2VmxFeUU1VTRwS21VQzVTYUhNR1dkUUdIdjE2eG5nckFZZW9oMEc0QgpuYStXTDI0M3JsbkM1VE5nZDA5QlFXZXlWeDlJSmlneHNwK2RMQzIzYnYxSUtVSDNFcFdISTRhSytOQnljdks0CkxRT2NFRWVYRm5OdENSZndmRU15OFVtMDBBRmJkb3Q4akdqOFhPOGVjOWlGZU9taW1IV2RXQ2tNbmRyYjQxbUkKZTcwRUpQZnAzQXFWZFMySDhrN3cxbFprY3NWQ0FramxtalhlWVFJREFRQUJBb0lCQUJyVjRwbE8zMm1KUEpHVApyYWh0WjVzYnpxVjR2cG9RODBJN2dPOVYxT1A0K0FGbGZPWWVtbmNDRUdCN0xIM1lBaEZxTkp2UUJMV2FGbFJWCndkYzFDSVNvNDRYSFJIZGw0YjN4dnZhOXV5QWRRNDhGSW5YZE96bjBHWE5aeEd0WEFEb0dyRkVkN3V6QmR4eGsKTkNFRUUxYVFLTDZBRDJUR2ZJZDBFUDJZcWlZb0syRjFwSGJ3N1FPNGhkcXdpWWRwK2xzcDZDQTd0NGpMTnpjeApkaFZHWkE4eHFpdU9MUndmTk85RXhFN1FyTmZtcGpWcHA2di93Q3FpMkdPWGdHVnp3WUtqWm1Yc2NxclltazN6CjZ5RjNXQVJLTDNsQTk0WWxnYTdHaTAzL0tDdS9CMXFoMVJKRU1wcFhva3RXNVJRdStxNU82aG92ZjNONTlOWkYKdlFmNU10MENnWUVBelljM0dMNk5OQmRGVVlpWDRHK21SM2FzNVU5QkdmcWpnSE1YMWtWZXlIZUc2WFlHT29iawpWSHdxU3pReE95VS9JcFRKeHNIR3RKZ2ZkOU1ncXd3bDloSTBpc3pUT2M5bkxlckVLZXdpdG9yejU2USthVEY5CjNGSjhBTExPOTZxVEk5WkxYV3BSRnZZL2lJMlJZRHZYemJybFZ1ZDkzRzhkUFoydUE0YkFtL2NDZ1lFQTBpdXEKdmRPSUtsTXFzOUVQcWdUZ2lZbXRzd0x1Q1djL1ZjWnpWTm5UZWcrYnBSajFxVmdWMTNyOTB6RTAyYmtCU0g5NgorWlRvWEdVbGEzY1p4c0VKZStwRXFtc3RpMDI5NzJTUzI3ZHNodXFEU2NrdVJLM2RUTW1SVXRubXR1SkJJbFhHCnJhSGJ6aXhVL1lwR1o4VEtpdzFaYmhiS3ZLWTNUSGxlbWxET1VtY0NnWUJlVmY3N0U1TjZZbWdGeVgxMG5hcWoKeUp3SlVMeGY4VVFVMUQ4UHNaMlV4QkFmbm5XemJYRG1PbXVyUXhTSndrbmRWSS9jODlxQjBBVTVtYVczL1FaNwprTldmRSs2cjdUKzl1ckU1VU5LS0dQTmswbVYzSVNsVTlHTklhc3BHc1h1Q0NuMWpMa1owRktrS3czZ0R4TlFECjhSSU5Ob24xb09hNS9tTDk2VjhFOXdLQmdRQ1JXa3ZxbnZwRU0yS01IQ0ZXTjZ0RzArWkNzTnNKdTlOTXNrUWYKUWNzRlZ2Z1JGWk1JL0hlV29HUWRoS0dGbG5LeHZpREJyZCtKenhZekhackJIODQ4V2dnRlNMeWw1QzFnL0ZDcApEbEZMZWJNMCs2TTVNbm1qMnAvY0NnR0xLQzFkM3E3YWROKzgxbUl0TzAxNEJOMERrRWJ5WVdielU0MVpJWE54CkRFTzFMd0tCZ0FpNkhWblZTN3NyZFYrTnRGTk1FMXl4b1g2S2sv
Z09VZ2ZESzduN2kzL1dWUWFSTGw0Umh4aTUKbzljN0xTbmZFRXptQUhxR0RmNUE4a2hDR01tZ0xyNnZQbkV3bXNDMmo4ankvRnZIMkpPdnp1QW02NFNUM1IvUQpkUktZVXZhT0ZDc3J4bjZiVVdFZnl3L1ZDeDJPWlZmU1AwOHp5Ymx6TDJQWUhWclFHMjAyCi0tLS0tRU5EIFJTQSBQUklWQVRFIEtFWS0tLS0tCg==[root@rancher-01 ~]#
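
Passing `--kubeconfig` on every call gets repetitive, and the file above embeds the cluster admin's client key. The following is a minimal sketch, assuming the generated file is named `kube_config_cluster.yml` as shown; the helper name `use_kubeconfig` is hypothetical and not part of RKE or kubectl. It restricts the file to its owner and exports `KUBECONFIG`, the environment variable kubectl reads when no `--kubeconfig` flag is given.

```shell
#!/usr/bin/env bash
# use_kubeconfig is a hypothetical helper name, not part of RKE or kubectl.
use_kubeconfig() {
  local cfg="${1:-kube_config_cluster.yml}"
  if [ ! -f "$cfg" ]; then
    echo "kubeconfig not found: $cfg" >&2
    return 1
  fi
  # The file contains the admin client key, so keep it owner-only.
  chmod 600 "$cfg"
  # Store an absolute path so later commands work from any directory.
  KUBECONFIG="$(cd "$(dirname "$cfg")" && pwd)/$(basename "$cfg")"
  export KUBECONFIG
}
```

After `use_kubeconfig kube_config_cluster.yml`, a plain `kubectl get nodes` in the same shell should reach the cluster without the extra flag.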

Install the kubectl binary

cat <<EOF > /etc/yum.repos.d/kubernetes.repo
[kubernetes]
name=Kubernetes
baseurl=https://packages.cloud.google.com/yum/repos/kubernetes-el7-x86_64
enabled=1
gpgcheck=1
repo_gpgcheck=1
gpgkey=https://packages.cloud.google.com/yum/doc/yum-key.gpg https://packages.cloud.google.com/yum/doc/rpm-package-key.gpg
EOF
yum install -y kubectl-1.17.6

Check the version

[root@rancher-01 ~]# kubectl version --client
Client Version: version.Info{Major:"1", Minor:"17", GitVersion:"v1.17.6", GitCommit:"d32e40e20d167e103faf894261614c5b45c44198", GitTreeState:"clean", BuildDate:"2020-05-20T13:16:24Z", GoVersion:"go1.13.9", Compiler:"gc", Platform:"linux/amd64"}
[root@rancher-01 ~]#
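
The kubectl package was pinned to 1.17.6 to match the cluster, whose hyperkube image in the `rke up` log is tagged `v1.17.6-rancher2`. As a rough sketch of checking that match in a script, the snippet below extracts `GitVersion` from the output above and compares it with the image tag. The sample strings are copied from this page; the variable names are illustrative.

```shell
#!/usr/bin/env bash
# Sample line copied from the output above. On a live system, use:
#   version_line="$(kubectl version --client)"
version_line='Client Version: version.Info{Major:"1", Minor:"17", GitVersion:"v1.17.6", GitCommit:"d32e40e20d167e103faf894261614c5b45c44198", GitTreeState:"clean", BuildDate:"2020-05-20T13:16:24Z", GoVersion:"go1.13.9", Compiler:"gc", Platform:"linux/amd64"}'

# Pull the value of the GitVersion field out of the version.Info struct.
client_version="$(printf '%s\n' "$version_line" | sed -n 's/.*GitVersion:"\([^"]*\)".*/\1/p')"

# Image tag as it appears in the rke log; drop the "-rancher2" suffix.
cluster_image_tag='v1.17.6-rancher2'
cluster_version="${cluster_image_tag%%-*}"

if [ "$client_version" = "$cluster_version" ]; then
  echo "kubectl $client_version matches cluster $cluster_version"
else
  echo "version skew: kubectl $client_version vs cluster $cluster_version" >&2
fi
```

An exact match is stricter than necessary, since kubectl is supported within one minor version of the API server, but it is the simplest check for a freshly built cluster.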

View cluster node information

[root@rancher-01 ~]# kubectl --kubeconfig kube_config_cluster.yml get nodes -o wide
NAME         STATUS   ROLES               AGE   VERSION   INTERNAL-IP      EXTERNAL-IP   OS-IMAGE                KERNEL-VERSION               CONTAINER-RUNTIME
rancher-01   Ready    controlplane,etcd   12m   v1.17.6   10.138.218.141   <none>        CentOS Linux 7 (Core)   3.10.0-957.27.2.el7.x86_64   docker://18.9.9
rancher-02   Ready    worker              12m   v1.17.6   10.138.218.144   <none>        CentOS Linux 7 (Core)   3.10.0-957.27.2.el7.x86_64   docker://18.9.9
rancher-03   Ready    worker              12m   v1.17.6   10.138.218.146   <none>        CentOS Linux 7 (Core)   3.10.0-957.27.2.el7.x86_64   docker://18.9.9
[root@rancher-01 ~]#
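
To script the same check rather than read it by eye, one option is to filter the STATUS column. This is a sketch under assumptions: the function name `all_nodes_ready` is hypothetical, and it expects input in the shape of `kubectl get nodes --no-headers`. The sample rows are the first columns of the output above.

```shell
#!/usr/bin/env bash
# all_nodes_ready reads `kubectl get nodes --no-headers` output on stdin.
# It prints every node whose STATUS is not exactly "Ready" and returns
# non-zero if it found any (a cordoned "Ready,SchedulingDisabled" node
# is also reported).
all_nodes_ready() {
  awk 'BEGIN { bad = 0 }
       $2 != "Ready" { bad = 1; print "not ready: " $1 }
       END { exit bad }'
}

# Sample rows taken from the output above (first four columns only).
sample_nodes='rancher-01 Ready controlplane,etcd 12m
rancher-02 Ready worker 12m
rancher-03 Ready worker 12m'

if printf '%s\n' "$sample_nodes" | all_nodes_ready; then
  echo "all nodes Ready"
fi
```

Against the live cluster, the call would be `kubectl --kubeconfig kube_config_cluster.yml get nodes --no-headers | all_nodes_ready`.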

View cluster component status

[root@rancher-01 ~]# kubectl --kubeconfig kube_config_cluster.yml get cs
NAME                 STATUS    MESSAGE             ERROR
controller-manager   Healthy   ok
scheduler            Healthy   ok
etcd-0               Healthy   {"health":"true"}
[root@rancher-01 ~]#

List namespaces

[root@rancher-01 ~]# kubectl --kubeconfig kube_config_cluster.yml get namespace
NAME              STATUS   AGE
default           Active   16m
ingress-nginx     Active   15m
kube-node-lease   Active   16m
kube-public       Active   16m
kube-system       Active   16m
[root@rancher-01 ~]#

View Pod status in the kube-system namespace

[root@rancher-01 ~]# kubectl --kubeconfig kube_config_cluster.yml get pods --namespace=kube-system -o wide
NAME                                      READY   STATUS      RESTARTS   AGE   IP               NODE         NOMINATED NODE   READINESS GATES
canal-dgt4n                               2/2     Running     0          17m   10.138.218.146   rancher-03   <none>           <none>
canal-v9pkx                               2/2     Running     0          17m   10.138.218.141   rancher-01   <none>           <none>
canal-xdg2l                               2/2     Running     0          17m   10.138.218.144   rancher-02   <none>           <none>
coredns-7c5566588d-d9pvd                  1/1     Running     0          17m   10.42.0.3        rancher-03   <none>           <none>
coredns-7c5566588d-tzkvn                  1/1     Running     0          16m   10.42.2.4        rancher-02   <none>           <none>
coredns-autoscaler-65bfc8d47d-8drw8       1/1     Running     0          17m   10.42.2.3        rancher-02   <none>           <none>
metrics-server-6b55c64f86-tmbpr           1/1     Running     0          16m   10.42.2.2        rancher-02   <none>           <none>
rke-coredns-addon-deploy-job-nt4pd        0/1     Completed   0          17m   10.138.218.141   rancher-01   <none>           <none>
rke-ingress-controller-deploy-job-tnbqq   0/1     Completed   0          16m   10.138.218.141   rancher-01   <none>           <none>
rke-metrics-addon-deploy-job-t4jrv        0/1     Completed   0          17m   10.138.218.141   rancher-01   <none>           <none>
rke-network-plugin-deploy-job-fk8tc       0/1     Completed   0          17m   10.138.218.141   rancher-01   <none>           <none>
[root@rancher-01 ~]#
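
The four `rke-*-deploy-job-*` pods at `0/1 Completed` are expected: they correspond to the "Executing deploy job" lines in the `rke up` log and exit once the addon is applied. A small filter can surface only the pods that need attention. This is a sketch, with `unhealthy_pods` as a hypothetical name; it treats `Running` and `Completed` as healthy and expects `kubectl get pods --no-headers` output on stdin.

```shell
#!/usr/bin/env bash
# unhealthy_pods prints "name status" for every pod whose STATUS column
# is neither Running nor Completed.
unhealthy_pods() {
  awk '$3 != "Running" && $3 != "Completed" { print $1, $3 }'
}

# A few rows from the output above (first five columns only).
sample_pods='canal-dgt4n 2/2 Running 0 17m
coredns-7c5566588d-d9pvd 1/1 Running 0 17m
rke-network-plugin-deploy-job-fk8tc 0/1 Completed 0 17m'

problems="$(printf '%s\n' "$sample_pods" | unhealthy_pods)"
if [ -z "$problems" ]; then
  echo "no unhealthy pods"
else
  printf '%s\n' "$problems"
fi
```

Note that this only looks at STATUS; a pod that is `Running` but not fully ready (for example `1/2`) would still pass.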

How Rancher defines Kubernetes cluster node roles

https://rancher.com/docs/rancher/v2.x/en/cluster-provisioning/production/nodes-and-roles/

https://kubernetes.io/docs/concepts/overview/components/

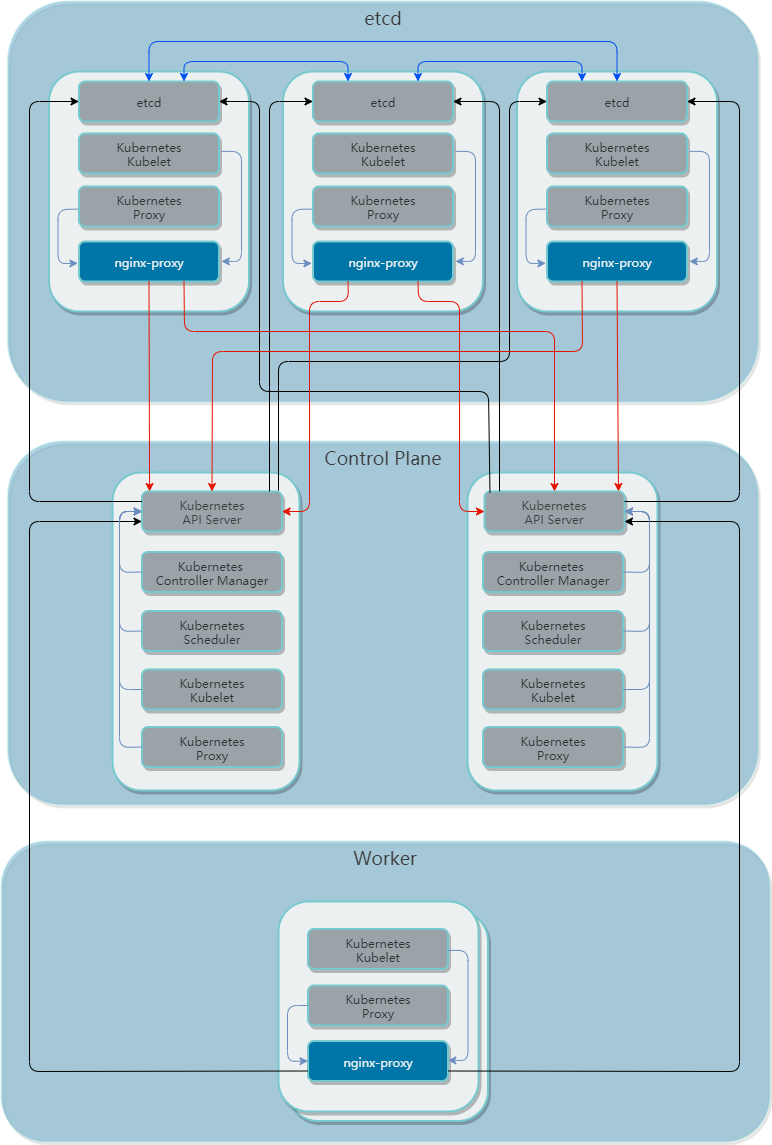

etcd

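Nodes with the etcd role run etcd, a consistent and highly available key-value store that holds the Kubernetes cluster's configuration data. etcd replicates the data to every node that has the etcd role.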

Note: In the UI, nodes with the etcd role are shown as "Unschedulable", which means that by default no Pods are scheduled onto these nodes.
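
How available etcd is depends on how many members it has: writes need a majority (quorum) of members to agree. The arithmetic below is a worked sketch of that rule, not something taken from the RKE output; the function names are illustrative.

```shell
#!/usr/bin/env bash
# etcd_quorum prints the majority required among N etcd members.
etcd_quorum() {
  echo $(( $1 / 2 + 1 ))
}

# etcd_fault_tolerance prints how many members may fail while a
# majority still survives.
etcd_fault_tolerance() {
  echo $(( $1 - ($1 / 2 + 1) ))
}

for n in 1 3 5; do
  echo "members=$n quorum=$(etcd_quorum "$n") tolerated_failures=$(etcd_fault_tolerance "$n")"
done
```

The cluster built on this page has a single etcd node (rancher-01), so it tolerates no etcd failure; three etcd nodes would tolerate one.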

controlplane

Nodes with the controlplane role run the Kubernetes master components (excluding etcd, which is a separate role). These components include kube-apiserver, kube-scheduler, kube-controller-manager, and cloud-controller-manager.

Note: In the UI, nodes with the controlplane role are shown as "Unschedulable", which means that by default no Pods are scheduled onto these nodes.

worker

Nodes with the worker role run the Kubernetes node components: kubelet, kube-proxy, and the container runtime.
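
To see how the three roles map onto the nodes of this cluster, the ROLES column of `kubectl get nodes` can be regrouped per role. This is a minimal sketch: `nodes_by_role` is a hypothetical helper, and the sample rows are the node listing shown earlier on this page.

```shell
#!/usr/bin/env bash
# nodes_by_role reads `kubectl get nodes --no-headers` output on stdin
# and prints one "role: node node ..." line per role in the ROLES column.
nodes_by_role() {
  awk '{
         n = split($3, roles, ",")
         for (i = 1; i <= n; i++)
           members[roles[i]] = members[roles[i]] " " $1
       }
       END { for (r in members) print r ":" members[r] }' | sort
}

# Sample rows taken from the node listing above (first four columns only).
sample_nodes='rancher-01 Ready controlplane,etcd 12m
rancher-02 Ready worker 12m
rancher-03 Ready worker 12m'

printf '%s\n' "$sample_nodes" | nodes_by_role
```

For this cluster the result shows rancher-01 carrying both controlplane and etcd, with rancher-02 and rancher-03 as workers.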